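Docker Network Management

After a Docker container is created, it usually needs to communicate over the network with other hosts or containers.

Official documentation: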

https://docs.docker.com/engine/network/

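Docker's Default Network Communication

Default Network Settings After Installing Docker

Once the Docker service is installed, every host gets a bridge interface named docker0, whose IP address is 172.17.0.1/16 by default.

Example: default network configuration after installing Docker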

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:34:df:91 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:df91/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:02:7f:a8:c6 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:2ff:fe7f:a8c6/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242027fa8c6 no

Example: network configuration on a host after installing Harbor

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:01:f3:0c brd ff:ff:ff:ff:ff:ff

inet 10.0.0.102/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe01:f30c/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:f4:23:e8:29 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

4: br-9af624ecd23e: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

state UP group default

link/ether 02:42:e9:1c:1a:7b brd ff:ff:ff:ff:ff:ff

inet 172.18.0.1/16 brd 172.18.255.255 scope global br-9af624ecd23e

valid_lft forever preferred_lft forever

inet6 fe80::42:e9ff:fe1c:1a7b/64 scope link

valid_lft forever preferred_lft forever

6: veth225895c@if5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether a6:f3:0f:ae:4b:43 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::a4f3:fff:feae:4b43/64 scope link

valid_lft forever preferred_lft forever

8: veth244c237@if7: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether 72:12:35:11:e8:14 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet6 fe80::7012:35ff:fe11:e814/64 scope link

valid_lft forever preferred_lft forever

10: veth81ab8cb@if9: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether 5e:07:f2:eb:43:c2 brd ff:ff:ff:ff:ff:ff link-netnsid 2

inet6 fe80::5c07:f2ff:feeb:43c2/64 scope link

valid_lft forever preferred_lft forever

12: vethf8499d4@if11: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether 4e:df:12:c5:58:83 brd ff:ff:ff:ff:ff:ff link-netnsid 4

inet6 fe80::4cdf:12ff:fec5:5883/64 scope link

valid_lft forever preferred_lft forever

14: vethceabf74@if13: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether 06:c0:58:ea:51:2e brd ff:ff:ff:ff:ff:ff link-netnsid 5

inet6 fe80::4c0:58ff:feea:512e/64 scope link

valid_lft forever preferred_lft forever

16: veth47c5069@if15: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether c6:6f:aa:51:be:38 brd ff:ff:ff:ff:ff:ff link-netnsid 3

inet6 fe80::c46f:aaff:fe51:be38/64 scope link

valid_lft forever preferred_lft forever

18: veth83fde4a@if17: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether 32:74:1e:e2:81:50 brd ff:ff:ff:ff:ff:ff link-netnsid 6

inet6 fe80::3074:1eff:fee2:8150/64 scope link

valid_lft forever preferred_lft forever

20: veth2c51f87@if19: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether ca:b7:c9:da:87:92 brd ff:ff:ff:ff:ff:ff link-netnsid 7

inet6 fe80::c8b7:c9ff:feda:8792/64 scope link

valid_lft forever preferred_lft forever

22: veth0f4a931@if21: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether fa:29:a4:4d:b1:c2 brd ff:ff:ff:ff:ff:ff link-netnsid 8

inet6 fe80::f829:a4ff:fe4d:b1c2/64 scope link

valid_lft forever preferred_lft forever

24: veth55b6555@if23: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master br-9af624ecd23e state UP group default

link/ether aa:87:c4:2c:de:7c brd ff:ff:ff:ff:ff:ff link-netnsid 9

inet6 fe80::a887:c4ff:fe2c:de7c/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#apt -y install bridge-utils

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

br-9af624ecd23e 8000.0242e91c1a7b no veth0f4a931

veth225895c

veth244c237

veth2c51f87

veth47c5069

veth55b6555

veth81ab8cb

veth83fde4a

vethceabf74

vethf8499d4

docker0 8000.0242f423e829 no

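Network Configuration After Creating Containers

Each time a new container is created:

- The host gains a virtual interface that forms a veth pair with the container's interface, for example 137: veth8ca6d43@if136 on the host. Inside the container the peer appears as 136: eth0@if137, so the interface indexes show which host interface is linked to which container.

- The container automatically receives an address from the 172.17.0.0/16 network. Addresses are handed out in order, starting at 172.17.0.2 for the first container, 172.17.0.3 for the second, and so on.

- The address a container receives is not fixed and may change each time the container restarts.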
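The allocation order described above can be illustrated with Python's standard `ipaddress` module. This is only a sketch of the arithmetic, not Docker's own address management code: the helper name `first_container_ips` is made up for illustration, and it assumes that no address has been released and reused.

```python
import ipaddress
from itertools import islice

def first_container_ips(bridge_cidr: str, count: int) -> list[str]:
    """Return the first `count` host addresses after skipping the bridge address."""
    bridge = ipaddress.ip_interface(bridge_cidr)      # e.g. 172.17.0.1/16
    hosts = bridge.network.hosts()                    # 172.17.0.1, 172.17.0.2, ...
    free = (ip for ip in hosts if ip != bridge.ip)    # docker0 itself holds 172.17.0.1
    return [str(ip) for ip in islice(free, count)]

print(first_container_ips("172.17.0.1/16", 3))
# ['172.17.0.2', '172.17.0.3', '172.17.0.4']
```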

Network State After Creating the First Container

Example: create a container, which obtains an IP address automatically

[root@ubuntu1804 ~]#docker run -it --rm alpine:3.11 sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

136: eth0@if137: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 6b8d9f3a653e

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

6b8d9f3a653e alpine:3.11 "sh" 13 seconds ago

Up 12 seconds pensive_chandrasekhar

Example: after the first container is created, the host has one additional interface

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:34:df:91 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:df91/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP

group default

link/ether 02:42:02:7f:a8:c6 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:2ff:fe7f:a8c6/64 scope link

valid_lft forever preferred_lft forever

137: veth8ca6d43@if136: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master docker0 state UP group default

link/ether fa:96:37:77:a9:a9 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::f896:37ff:fe77:a9a9/64 scope link

valid_lft forever preferred_lft forever

Example: bridge status after creating the container

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242027fa8c6 no veth8ca6d43
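The link between the host-side veth interface and the container's eth0 can be read from the interface indexes in the `ip a` header lines: each end names the index of its peer after `@if`. The sketch below parses two header lines copied from the output above; the function name `parse_links` and the regular expression are illustrative assumptions, not part of any Docker tooling.

```python
import re

# Matches an interface header line from `ip a`, e.g.
# "137: veth8ca6d43@if136: <BROADCAST,...>"
HEADER = re.compile(r"^(\d+): ([^@:\s]+)@if(\d+):")

def parse_links(ip_a_output: str) -> dict[int, tuple[str, int]]:
    """Map interface index -> (name, peer index) for every name@ifN entry."""
    links = {}
    for line in ip_a_output.splitlines():
        m = HEADER.match(line.strip())
        if m:
            links[int(m.group(1))] = (m.group(2), int(m.group(3)))
    return links

host = parse_links("137: veth8ca6d43@if136: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500")
container = parse_links("136: eth0@if137: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500")

for index, (name, peer) in host.items():
    peer_name, peer_of_peer = container[peer]
    # The pair is consistent when each end points at the other end's index.
    print(name, "<->", peer_name, peer_of_peer == index)
```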

Network State After Creating the Second Container

Example: create a second container

[root@ubuntu1804 ~]#docker run -it --rm alpine:3.11 sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

140: eth0@if141: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:03 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.3/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.3 ab3ea580804a

/ # ping ab3ea580804a

PING ab3ea580804a (172.17.0.3): 56 data bytes

64 bytes from 172.17.0.3: seq=0 ttl=64 time=0.037 ms

64 bytes from 172.17.0.3: seq=1 ttl=64 time=0.132 ms

^C

--- ab3ea580804a ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.037/0.084/0.132 ms

/ # ping 6b8d9f3a653e

ping: bad address '6b8d9f3a653e'

/ #

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

ab3ea580804a alpine:3.11 "sh" 9 seconds ago

Up 7 seconds vigilant_jones

6b8d9f3a653e alpine:3.11 "sh" 13 seconds ago

Up 12 seconds pensive_chandrasekhar

Example: after the second container is created, the host gains another virtual interface

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:34:df:91 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:df91/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP

group default

link/ether 02:42:02:7f:a8:c6 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:2ff:fe7f:a8c6/64 scope link

valid_lft forever preferred_lft forever

137: veth8ca6d43@if136: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master docker0 state UP group default

link/ether fa:96:37:77:a9:a9 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::f896:37ff:fe77:a9a9/64 scope link

valid_lft forever preferred_lft forever

141: vethf599a47@if140: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master docker0 state UP group default

link/ether 96:e7:52:fe:67:54 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet6 fe80::94e7:52ff:fefe:6754/64 scope link

valid_lft forever preferred_lft forever

Example: bridge status after creating the second container

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

docker0 8000.0242027fa8c6 no veth8ca6d43

vethf599a47

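Communication Between Containers

Containers on the Same Host Can Communicate With Each Other

By default:

- Different containers on the same host can communicate with each other

dockerd --icc Enable inter-container communication (default true)

--icc=false #this setting blocks communication between containers on the same host

- Containers on different hosts draw from the same private address range, so their IP addresses repeat from host to host and by default they cannot communicate with each other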
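Besides the `--icc=false` command-line flag, the daemon configuration file /etc/docker/daemon.json accepts an `icc` key with the same meaning. The following sketch only renders the JSON text and does not write any file; the helper name `with_icc_disabled` and the mirror URL are placeholders chosen for illustration. The option should be set in one place only, either in the file or on the dockerd command line.

```python
import json

def with_icc_disabled(existing: str = "{}") -> str:
    """Return daemon.json text with inter-container communication turned off."""
    config = json.loads(existing)
    config["icc"] = False          # same meaning as `dockerd --icc=false`
    return json.dumps(config, indent=2)

# Existing settings are kept; only the "icc" key is added or overwritten.
print(with_icc_disabled('{"registry-mirrors": ["https://mirror.example.com"]}'))
```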

Example: access between containers on the same host

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:34:df:91 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:df91/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP

group default

link/ether 02:42:02:7f:a8:c6 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:2ff:fe7f:a8c6/64 scope link

valid_lft forever preferred_lft forever

137: veth8ca6d43@if136: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master docker0 state UP group default

link/ether fa:96:37:77:a9:a9 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::f896:37ff:fe77:a9a9/64 scope link

valid_lft forever preferred_lft forever

141: vethf599a47@if140: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

master docker0 state UP group default

link/ether 96:e7:52:fe:67:54 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet6 fe80::94e7:52ff:fefe:6754/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#docker run -it --rm alpine:3.11 sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

136: eth0@if137: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 172.17.0.3

PING 172.17.0.3 (172.17.0.3): 56 data bytes

64 bytes from 172.17.0.3: seq=0 ttl=64 time=0.452 ms

64 bytes from 172.17.0.3: seq=1 ttl=64 time=0.190 ms

^C

--- 172.17.0.3 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.190/0.321/0.452 ms

/ # ping 172.17.0.1

PING 172.17.0.1 (172.17.0.1): 56 data bytes

64 bytes from 172.17.0.1: seq=0 ttl=64 time=0.139 ms

64 bytes from 172.17.0.1: seq=1 ttl=64 time=0.183 ms

^C

--- 172.17.0.1 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.139/0.161/0.183 ms

/ #

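Blocking Communication Between Containers on the Same Host

Example: block communication between different containers on the same host

#The dockerd option --icc=false blocks communication between different containers on the same host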

[root@ubuntu1804 ~]#vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --icc=false

[root@ubuntu1804 ~]#systemctl daemon-reload

[root@ubuntu1804 ~]#systemctl restart docker

#Create two containers and verify that they cannot communicate

[root@ubuntu1804 ~]#docker run -it --name test1 --rm alpine sh

/ # hostname -i

172.17.0.2

[root@ubuntu1804 ~]#docker run -it --name test2 --rm alpine sh

/ # hostname -i

172.17.0.3

/ # ping 172.17.0.2

Example: create a container on a second host; by default, containers on different hosts cannot communicate

[root@ubuntu1804 ~]#docker pull alpine

Using default tag: latest

latest: Pulling from library/alpine

c9b1b535fdd9: Pull complete

Digest: sha256:ab00606a42621fb68f2ed6ad3c88be54397f981a7b70a79db3d1172b11c4367d

Status: Downloaded newer image for alpine:latest

docker.io/library/alpine:latest

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

cab76bbb3db2 alpine "sh" About a minute ago

Up About a minute jolly_rosalind

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:6b:54:d3 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.101/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe6b:54d3/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:1d:73:8b:71 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

[root@ubuntu1804 ~]#docker run -it --rm alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

4: eth0@if5: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping -c 1 172.17.0.3

PING 172.17.0.3 (172.17.0.3): 56 data bytes

--- 172.17.0.3 ping statistics ---

1 packets transmitted, 0 packets received, 100% packet loss

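Changing the Network Configuration of the Default docker0 Bridge

After Docker is installed, a bridge named docker0 is created automatically with the IP address 172.17.0.1/16. This network may conflict with a network the host already uses; to avoid the conflict, the bridge can be moved to a different address range.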
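Whether the bridge network collides with a host network can be checked with Python's standard `ipaddress` module before picking a new range. This is a minimal sketch: the function name `conflicts` is an illustrative choice, and 172.17.5.20/24 is a made-up host address used only to show a colliding case.

```python
import ipaddress

def conflicts(bridge_cidr: str, host_cidr: str) -> bool:
    """True if the bridge subnet overlaps the subnet of a host interface."""
    bridge_net = ipaddress.ip_interface(bridge_cidr).network
    host_net = ipaddress.ip_interface(host_cidr).network
    return bridge_net.overlaps(host_net)

print(conflicts("172.17.0.1/16", "10.0.0.100/24"))       # False: the host used in these examples
print(conflicts("172.17.0.1/16", "172.17.5.20/24"))      # True: hypothetical host inside 172.17.0.0/16
print(conflicts("192.168.100.1/24", "172.17.5.20/24"))   # False: a relocated bridge avoids the clash
```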

Example: change the IP address of docker0 to a specified address

#Method 1

[root@ubuntu1804 ~]#vim /etc/docker/daemon.json

[root@ubuntu1804 ~]#cat /etc/docker/daemon.json

{

"bip": "192.168.100.1/24",

"registry-mirrors": ["https://si7y70hh.mirror.aliyuncs.com"]

}

[root@ubuntu1804 ~]#systemctl restart docker.service

#Method 2

[root@ubuntu1804 ~]#vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --bip=192.168.100.1/24

[root@ubuntu1804 ~]#systemctl daemon-reload

[root@ubuntu1804 ~]#systemctl restart docker.service

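#Note: do not combine the two methods. If the same option is set both in daemon.json and on the dockerd command line, the docker service fails to start

#Verify the result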

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:48:f0:a2 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe48:f0a2/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:c5:a7:cf:5c brd ff:ff:ff:ff:ff:ff

inet 192.168.100.1/24 brd 192.168.100.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:c5ff:fea7:cf5c/64 scope link

valid_lft forever preferred_lft forever

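Changing the Default Network Settings to Use a Custom Bridge

New containers use the docker0 network configuration by default; the default can be changed to point at a custom bridge network instead.

Example: replace the default docker0 with a custom bridge

#View the default network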

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:40:27:06 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe40:2706/64 scope link

valid_lft forever preferred_lft forever

5: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:35:ba:e7:ce brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:35ff:feba:e7ce/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#apt -y install bridge-utils

[root@ubuntu1804 ~]#brctl addbr br0

[root@ubuntu1804 ~]#ip a a 192.168.100.1/24 dev br0

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

br0 8000.000000000000 no

docker0 8000.024235bae7ce no

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:40:27:06 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe40:2706/64 scope link

valid_lft forever preferred_lft forever

5: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:35:ba:e7:ce brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:35ff:feba:e7ce/64 scope link

valid_lft forever preferred_lft forever

18: br0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN

group default qlen 1000

link/ether 00:00:00:00:00:00 brd ff:ff:ff:ff:ff:ff

inet 192.168.100.1/24 scope global br0

valid_lft forever preferred_lft forever

inet6 fe80::9cf2:e0ff:fe4e:96b3/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock -b br0

[root@ubuntu1804 ~]#systemctl daemon-reload

[root@ubuntu1804 ~]#systemctl restart docker

[root@ubuntu1804 ~]#ps -ef |grep dockerd

root 15773 1 0 11:12 ? 00:00:00 /usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock -b br0

root 15960 2300 0 11:14 pts/0 00:00:00 grep --color=auto dockerd

[root@ubuntu1804 ~]#docker run -it alpine sh

/ # hostname -i

192.168.100.2

/ # ping www.baidu.com

PING www.baidu.com (220.181.38.150): 56 data bytes

64 bytes from 220.181.38.150: seq=0 ttl=127 time=4.028 ms

[root@ubuntu1804 ~]#

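Linking Containers by Name

When a container is created, Docker assigns its name, container ID, and IP address automatically, so none of the three is fixed. How, then, can containers be told apart so that traffic reaches the intended one? The solution is to give each container a fixed name and have containers reach their targets through that name.

There are two kinds of fixed names:

- Container names

- Aliases of container names

Note: both approaches need at least two containers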

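Linking by Container Name

About Container Names

Containers on the same host can reach each other through user-defined container names. For example, a service might serve its static front-end pages with nginx and its dynamic pages with tomcat, and also need a load balancer such as haproxy to dispatch requests to the nginx and tomcat containers. A container's internal IP address is assigned dynamically by Docker when the container starts, whereas a name given to the container stays comparatively stable, so names suit this scenario well.

Note: if the address of the referenced container changes, the referencing container must be restarted for the change to take effect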

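Implementing Container Names

When creating a container with docker run, the --link option references another container by name. In essence, it adds a mapping from the IP address of the container named after --link to its host names in the new container's /etc/hosts, which is how the name gets resolved.

--link list #Add link to another container

Format:

docker run --name <container name> #first create a container with the given name

docker run --link <target container ID or container name> #then reference that container when creating another one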
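Since --link works through /etc/hosts, name resolution inside the linking container amounts to a lookup in that file. The sketch below performs such a lookup on sample text shaped like the /etc/hosts of a container started with --link server1; the function name `parse_hosts` is an illustrative assumption, and the real resolver in the container is the C library, not this code.

```python
def parse_hosts(text: str) -> dict[str, str]:
    """Map every name in /etc/hosts-style text to its IP address."""
    table = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()    # drop comments and blank lines
        if not line:
            continue
        ip, *names = line.split()
        for name in names:
            table.setdefault(name, ip)          # keep the first entry for a name
    return table

hosts = parse_hosts("""
127.0.0.1 localhost
172.17.0.2 server1 cdb5173003f5
172.17.0.3 7ca466320980
""")
print(hosts.get("server1"))   # 172.17.0.2, added by --link server1
print(hosts.get("server2"))   # None: the container's own name is not in the file
```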

Hands-on case 1: communicate between containers using a container name

- First create a container with an explicit name

[root@ubuntu1804 ~]#docker run -it --name server1 --rm alpine:3.11 sh

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 cdb5173003f5

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

150: eth0@if151: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 172.17.0.2

PING 172.17.0.2 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.038 ms

^C

--- 172.17.0.2 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.038/0.038/0.038 ms

/ # ping server1

ping: bad address 'server1'

/ # ping cdb5173003f5

PING cdb5173003f5 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.040 ms

64 bytes from 172.17.0.2: seq=1 ttl=64 time=0.128 ms

^C

--- cdb5173003f5 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.040/0.084/0.128 ms

/ #

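- Create a second container that references the first container by name

The name of the first container is added to /etc/hosts automatically, so the first container can be reached by its name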

[root@ubuntu1804 ~]#docker run -it --rm --name server2 --link server1 alpine:3.11 sh

/ # env

HOSTNAME=395d8c3392ee

SHLVL=1

HOME=/root

TERM=xterm

SERVER1_NAME=/server2/server1

PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin

PWD=/

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 server1 cdb5173003f5

172.17.0.3 7ca466320980

/ # ping server1

PING server1 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.111 ms

^C

--- server1 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.111/0.111/0.111 ms

/ # ping server2

ping: bad address 'server2'

/ # ping 7ca466320980

PING 7ca466320980 (172.17.0.3): 56 data bytes

64 bytes from 172.17.0.3: seq=0 ttl=64 time=0.116 ms

64 bytes from 172.17.0.3: seq=1 ttl=64 time=0.069 ms

^C

--- 7ca466320980 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.069/0.092/0.116 ms

/ # ping cdb5173003f5

PING cdb5173003f5 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.072 ms

64 bytes from 172.17.0.2: seq=1 ttl=64 time=0.184 ms

^C

--- cdb5173003f5 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.072/0.128/0.184 ms

/ #

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

7ca466320980 alpine:3.11 "sh" 24 seconds ago

Up 23 seconds server2

cdb5173003f5 alpine:3.11 "sh" 7 minutes ago

Up 7 minutes server1

Hands-on case 2: link a wordpress container and a MySQL container

[root@centos7 ~]#tree lamp_docker/

lamp_docker/

├── env_mysql.list

├── env_wordpress.list

└── mysql

└── mysql_test.cnf

[root@centos7 ~]#cat lamp_docker/env_mysql.list

MYSQL_ROOT_PASSWORD=123456

MYSQL_DATABASE=wordpress

MYSQL_USER=wpuser

MYSQL_PASSWORD=wppass

[root@centos7 ~]#cat lamp_docker/env_wordpress.list

WORDPRESS_DB_HOST=mysql:3306

WORDPRESS_DB_NAME=wordpress

WORDPRESS_DB_USER=wpuser

WORDPRESS_DB_PASSWORD=wppass

WORDPRESS_TABLE_PREFIX=wp_

[root@centos7 ~]#cat lamp_docker/mysql/mysql_test.cnf

[mysqld]

server-id=100

log-bin=mysql-bin

[root@centos7 ~]#docker run --name mysql -v /root/lamp_docker/mysql/:/etc/mysql/conf.d -v /data/mysql:/var/lib/mysql --env-file=/root/lamp_docker/env_mysql.list -d -p 3306:3306 mysql:8.0

[root@centos7 ~]#docker run -d --name wordpress --link mysql -v /data/wordpress:/var/www/html/wp-content --env-file=/root/lamp_docker/env_wordpress.list -p 80:80 wordpress:php7.4-apache

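Linking by Custom Container Alias

About Container Aliases

A user-defined container name may change later, and when it does, every program that refers to it has to change as well. For example, if a program calls a service through a fixed container name, calls that use the old name fail once the container is renamed, and updating the program every time is tedious. A custom alias solves this: the container name can change freely as long as the alias stays the same.

Implementing Container Aliases

Command format

docker run --name <container name>

#first create a container with the given name

docker run --name <container name> --link <target container name>:"<alias 1> <alias 2> ..."

#then create a new container that assigns aliases to the container created above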
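When several aliases are given, they form a single argument that contains spaces, which is why the format above wraps them in quotes. The sketch below assembles such a command line with Python's `shlex` module to show where the quoting ends up; the helper name `link_argument` is hypothetical, and the code only prints the command without running Docker.

```python
import shlex

def link_argument(target: str, aliases: list[str]) -> str:
    """Build the value passed to --link: '<target>:<alias1> <alias2> ...'."""
    return f"{target}:{' '.join(aliases)}" if aliases else target

argv = ["docker", "run", "-it", "--rm", "--name", "server4",
        "--link", link_argument("server1", ["server1-alias1", "server1-alias2"]),
        "alpine:3.11", "sh"]

# shlex.join quotes only the argument that contains a space.
print(shlex.join(argv))
```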

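Hands-on case: using container aliases

Example: create a third container that references the container created earlier and gives it an alias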

[root@ubuntu1804 ~]#docker run -it --rm --name server3 --link server1:server1-alias alpine:3.11 sh

/ # env

HOSTNAME=395d8c3392ee

SHLVL=1

HOME=/root

TERM=xterm

SERVER1-ALIAS_NAME=/server3/server1-alias

PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin

PWD=/

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 server1-alias cdb5173003f5 server1

172.17.0.4 d9622c6831f7

/ # ping server1

PING server1 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.101 ms

^C

--- server1 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.101/0.101/0.101 ms

/ # ping server1-alias

PING server1-alias (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.073 ms

^C

--- server1-alias ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.073/0.073/0.073 ms

/ #

Example: create a fourth container that references the container created earlier and gives it several aliases

[root@ubuntu1804 ~]#docker run -it --rm --name server4 --link server1:"server1-alias1 server1-alias2" alpine:3.11 sh

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 server1-alias1 server1-alias2 cdb5173003f5 server1

172.17.0.5 db3d2f084c05

/ # ping server1

PING server1 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.107 ms

^C

--- server1 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.107/0.107/0.107 ms

/ # ping server1-alias1

PING server1-alias1 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.126 ms

^C

--- server1-alias1 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.126/0.126/0.126 ms

/ # ping server1-alias2

PING server1-alias2 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.124 ms

^C

--- server1-alias2 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.124/0.124/0.124 ms

/ #

Docker Network Connection Modes

Introduction to Network Modes

Docker networking supports five network modes:

- none

- bridge

- container

- host

- network-name

Example: list the three networks that exist by default

[root@ubuntu1804 ~]#docker network ls

NETWORK ID NAME DRIVER SCOPE

fe08e6d23c4c bridge bridge local

cb64aa83626c host host local

10619d45dcd4 none null local

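Specifying the Network Mode

New containers use bridge mode by default. When creating a container, the following docker run options select the network mode

docker run --network <mode>

docker run --net=<mode>

<mode>: can be one of the following values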

none

bridge

host

container:<container name or ID>

<user-defined network name>

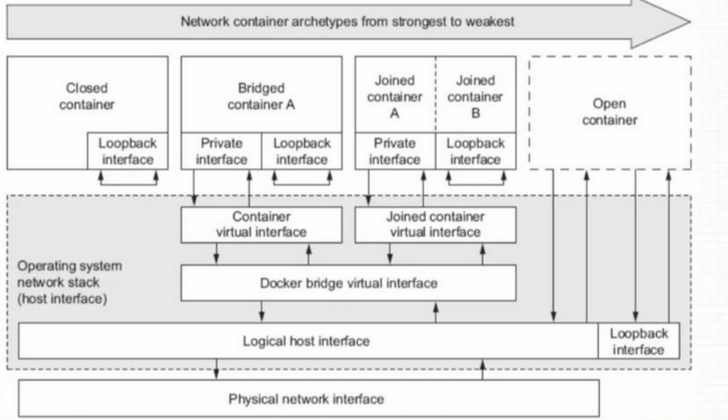

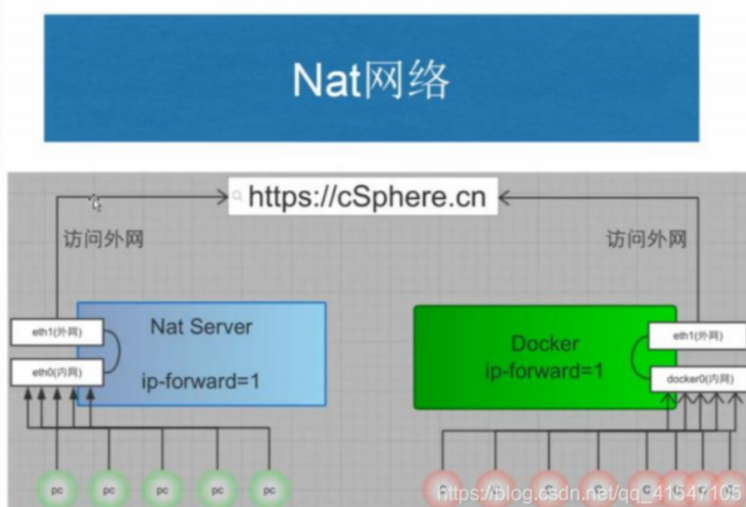
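The values accepted by --network listed above fall into five groups. The following sketch sorts a value into its group purely by its textual form; the function name `classify_network` and the network name `my-net` are illustrative assumptions, and the code does not check whether the container or network actually exists.

```python
def classify_network(value: str) -> str:
    """Classify a `docker run --network` value into one of the five modes."""
    if value in ("none", "bridge", "host"):
        return value
    if value.startswith("container:"):
        target = value.split(":", 1)[1]
        if not target:
            raise ValueError("container mode needs a container name or ID")
        return "container"
    return "custom"    # anything else is treated as a user-defined network name

for v in ("bridge", "host", "none", "container:server1", "my-net"):
    print(v, "->", classify_network(v))
```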

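Bridge Network Mode

Bridge Network Mode Architecture

This is Docker's default mode: a container started without any mode specified uses bridge mode, and it is also the most widely used one. In this mode each container is given its own network settings, such as an IP address, and is attached to a virtual bridge through which it communicates with the outside.

Containers can communicate with external networks: they reach the outside through SNAT, and DNAT lets external hosts reach a container, so this mode is also called NAT mode.

In this mode the host must have ip_forward enabled.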

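Characteristics of Bridge Network Mode

- Isolated network resources: containers on different hosts cannot communicate directly, and each host uses its own independent network

- No manual configuration needed: by default a container automatically obtains an IP address from 172.17.0.0/16, and this range can be changed

- Access to external networks: containers reach the outside through the host's physical interface using SNAT

- External hosts cannot reach containers directly: inbound access from outside can be enabled by configuring DNAT

- Lower performance: traffic passes through NAT, and the address translation adds overhead

- Cumbersome port management: every container must be manually given a unique host port, so port conflicts arise easily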

Default Settings of Bridge Mode

Example: view the bridge network information

[root@ubuntu1804 ~]#docker network inspect bridge

[

{

"Name": "bridge",

"Id":

"fe08e6d23c4c9de00bdab479446f136c09537a1551aa62ff2c95f8cfcabd6357",

"Created": "2020-01-31T16:11:32.718471804+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "172.17.0.0/16",

"Gateway": "172.17.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {

"cdb5173003f52033c7c8183994cf763d2a64ff39c431431402fd8dedf4727393":

{

"Name": "server1",

"EndpointID":

"6977fb6f74b75014513c34296f1e23ff0197f81f3209bbf7fcd39ba8e9f54c0d",

"MacAddress": "02:42:ac:11:00:02",

"IPv4Address": "172.17.0.2/16",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.default_bridge": "true",

"com.docker.network.bridge.enable_icc": "true",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",

"com.docker.network.bridge.name": "docker0",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

]

[root@ubuntu1804 ~]#
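The JSON printed by docker network inspect can be processed with any JSON parser. The sketch below extracts the bridge name, the subnet, and the attached containers from a trimmed-down sample shaped like the output above; the function name `summarize` is an illustrative choice, and the sample shortens the container key to its 12-character ID for readability.

```python
import json

# Trimmed sample with the same structure as `docker network inspect bridge`.
sample = """
[{"Name": "bridge",
  "IPAM": {"Config": [{"Subnet": "172.17.0.0/16", "Gateway": "172.17.0.1"}]},
  "Containers": {
    "cdb5173003f5": {"Name": "server1", "IPv4Address": "172.17.0.2/16"}
  },
  "Options": {"com.docker.network.bridge.name": "docker0"}}]
"""

def summarize(inspect_json: str) -> dict:
    net = json.loads(inspect_json)[0]            # inspect prints a JSON array
    return {
        "bridge": net["Options"].get("com.docker.network.bridge.name"),
        "subnet": net["IPAM"]["Config"][0]["Subnet"],
        "containers": {c["Name"]: c["IPv4Address"].split("/")[0]
                       for c in net["Containers"].values()},
    }

print(summarize(sample))
```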

Example: network state of the host

#After Docker is installed, ip_forward is enabled by default

[root@ubuntu1804 ~]#cat /proc/sys/net/ipv4/ip_forward

1

[root@ubuntu1804 ~]#iptables -vnL -t nat

Chain PREROUTING (policy ACCEPT 245 packets, 29850 bytes)

pkts bytes target prot opt in out source destination

11 636 DOCKER all -- * * 0.0.0.0/0 0.0.0.0/0

ADDRTYPE match dst-type LOCAL

Chain INPUT (policy ACCEPT 103 packets, 20705 bytes)

pkts bytes target prot opt in out source destination

Chain OUTPUT (policy ACCEPT 144 packets, 10324 bytes)

pkts bytes target prot opt in out source destination

0 0 DOCKER all -- * * 0.0.0.0/0 !127.0.0.0/8

ADDRTYPE match dst-type LOCAL

Chain POSTROUTING (policy ACCEPT 158 packets, 11500 bytes)

pkts bytes target prot opt in out source destination

125 7831 MASQUERADE all -- * !docker0 172.17.0.0/16 0.0.0.0/0

Chain DOCKER (2 references)

pkts bytes target prot opt in out source destination

2 168 RETURN all -- docker0 * 0.0.0.0/0 0.0.0.0/0

Example: accessing external networks through the host's physical NIC using SNAT

#Set up an httpd server on another host

[root@centos7 ~]#systemctl is-active httpd

active

#Start a container; the default network mode is bridge

[root@ubuntu1804 ~]#docker run -it --rm alpine:3.11 sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

166: eth0@if167: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

#The container can reach other hosts

/ # ping 10.0.0.7

PING 10.0.0.7 (10.0.0.7): 56 data bytes

64 bytes from 10.0.0.7: seq=0 ttl=63 time=0.764 ms

64 bytes from 10.0.0.7: seq=1 ttl=63 time=1.147 ms

^C

--- 10.0.0.7 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.764/0.955/1.147 ms

/ # ping www.baidu.com

PING www.baidu.com (61.135.169.125): 56 data bytes

64 bytes from 61.135.169.125: seq=0 ttl=127 time=5.182 ms

^C

--- www.baidu.com ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 5.182/5.182/5.182 ms

/ # traceroute 10.0.0.7

traceroute to 10.0.0.7 (10.0.0.7), 30 hops max, 46 byte packets

1 172.17.0.1 (172.17.0.1) 0.008 ms 0.008 ms 0.007 ms

2 10.0.0.7 (10.0.0.7) 0.255 ms 0.510 ms 0.798 ms

/ # wget -qO - 10.0.0.7

Website on 10.0.0.7

/ # route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 172.17.0.1 0.0.0.0 UG 0 0 0 eth0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 eth0

[root@centos7 ~]#curl 127.0.0.1

Website on 10.0.0.7

[root@centos7 ~]#tail /var/log/httpd/access_log

127.0.0.1 - - [01/Feb/2020:19:31:16 +0800] "GET / HTTP/1.1" 200 20 "-"

"curl/7.29.0"

10.0.0.100 - - [01/Feb/2020:19:31:21 +0800] "GET / HTTP/1.1" 200 20 "-" "Wget"

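Changing the default bridge network configuration

There are two ways to change the default bridge network configuration. Use only one of them: setting the same option in both places causes a conflict and the docker service fails to start.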
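Whether both methods are in use at once can be checked before restarting the service. This is a rough sketch under stated assumptions: bip_defined_twice is a hypothetical helper, the default paths are the ones used in the examples of this section, and the check is a plain text match rather than a real parse of either file.

```shell
#!/usr/bin/env bash
# Sketch: detect whether the docker0 address is configured both as a
# --bip flag on the unit's ExecStart line and as a "bip" key in
# daemon.json. Both paths are parameters so copies can be checked.
bip_defined_twice() {
    local unit="${1:-/lib/systemd/system/docker.service}"
    local json="${2:-/etc/docker/daemon.json}"
    grep -s '^ExecStart' "$unit" | grep -q -e '--bip' && grep -qs '"bip"' "$json"
}

if bip_defined_twice; then
    echo "bip is set in both places; keep only one of them"
else
    echo "no duplicate bip setting found"
fi
```

The same idea applies to any other option that exists both as a dockerd flag and as a daemon.json key.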

Example: changing the default bridge subnet, method 1

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:6b:54:d3 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe6b:54d3/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:e0:ef:72:05 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:e0ff:feef:7205/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#docker run -it --rm alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

4: eth0@if5: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # exit

#Change the bridge address

[root@ubuntu1804 ~]#vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --bip=10.100.0.1/24

[root@ubuntu1804 ~]#systemctl daemon-reload

[root@ubuntu1804 ~]#systemctl restart docker

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:6b:54:d3 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.101/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe6b:54d3/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:e0:ef:72:05 brd ff:ff:ff:ff:ff:ff

inet 10.100.0.1/24 brd 10.100.0.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:e0ff:feef:7205/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#docker run -it --rm alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

179: eth0@if180: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:0a:64:00:02 brd ff:ff:ff:ff:ff:ff

inet 10.100.0.2/24 brd 10.100.0.255 scope global eth0

valid_lft forever preferred_lft forever

/ # exit

[root@ubuntu1804 ~]#docker network inspect bridge

[

{

"Name": "bridge",

"Id":

"ace11446c233d1fef534d9c734bf3ab5524afdbe76934a1a0e64803d03d54f98",

"Created": "2020-02-02T13:23:58.037630754+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "10.100.0.0/24",

"Gateway": "10.100.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {},

"Options": {

"com.docker.network.bridge.default_bridge": "true",

"com.docker.network.bridge.enable_icc": "true",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",

"com.docker.network.bridge.name": "docker0",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

]

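Example: changing the bridge network configuration, method 2

#Note: JSON does not allow comments; the # annotations below are explanatory only and must not appear in the real file

[root@ubuntu1804 ~]#vim /etc/docker/daemon.json

{

"hosts": ["tcp://0.0.0.0:2375", "fd://"],

"bip": "192.168.100.100/24", #IP address assigned to docker0; /24 is the netmask of the container IPs

"fixed-cidr": "192.168.100.128/26", #range from which container IPs are allocated; /26 is not the containers' netmask, it only delimits the address range

"fixed-cidr-v6": "2001:db8::/64",

"mtu": 1500,

"default-gateway": "192.168.100.200", #the gateway must be in the same subnet as bip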

"default-gateway-v6": "2001:db8:abcd::89",

"dns": [ "1.1.1.1", "8.8.8.8"]

}

[root@ubuntu1804 ~]#systemctl restart docker

[root@ubuntu1804 ~]#ip a show docker0

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP

group default

link/ether 02:42:23:be:97:75 brd ff:ff:ff:ff:ff:ff

inet 192.168.100.100/24 brd 192.168.100.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:23ff:febe:9775/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#docker run -it --name b1 busybox

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

36: eth0@if37: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:c0:a8:64:80 brd ff:ff:ff:ff:ff:ff

inet 192.168.100.128/24 brd 192.168.100.255 scope global eth0

valid_lft forever preferred_lft forever

/ # cat /etc/resolv.conf

search ayaka.com wang.org

nameserver 1.1.1.1

nameserver 8.8.8.8

/ # route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.100.200 0.0.0.0 UG 0 0 0 eth0

192.168.100.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

[root@ubuntu1804 ~]#docker network inspect bridge

[

{

"Name": "bridge",

"Id":

"381bc2df514b0901e2a7570708aa93a3af05f298f27d4d077b52a8b324fad66c",

"Created": "2020-07-27T21:58:31.419420569+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "192.168.100.0/24",

"IPRange": "192.168.100.128/26",

"Gateway": "192.168.100.100",

"AuxiliaryAddresses": {

"DefaultGatewayIPv4": "192.168.100.200"

}

},

{

"Subnet": "2001:db8::/64",

"AuxiliaryAddresses": {

"DefaultGatewayIPv6": "2001:db8:abcd::89"

}

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {

"2f16c9f5efc1eefe766f6ae6ba7fcfa3434e8f4876ecdcf48c3343acd9e45b2d":

{

"Name": "b1",

"EndpointID":

"0a0fdf3d786310dca53e04f0734b9f0eeaa79aac147c7a3c69ac8d04444570f3",

"MacAddress": "02:42:c0:a8:64:80",

"IPv4Address": "192.168.100.128/24",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.default_bridge": "true",

"com.docker.network.bridge.enable_icc": "true",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",

"com.docker.network.bridge.name": "docker0",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

]
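The relationship between bip and fixed-cidr in the example above can be checked with a little arithmetic. The sketch below is an illustration only; the helper names ip_to_int, int_to_ip and cidr_range are hypothetical. It prints the first and last address of a CIDR block, which shows that 192.168.100.128/26 is a sub-range of the /24 defined by bip.

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
    local n=$1
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Print the first and last address covered by a CIDR block.
cidr_range() {
    local ip=${1%/*} prefix=${1#*/}
    local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    local first=$(( $(ip_to_int "$ip") & mask ))
    local last=$(( first | (~mask & 0xFFFFFFFF) ))
    echo "$(int_to_ip "$first") $(int_to_ip "$last")"
}

cidr_range 192.168.100.128/26   # prints: 192.168.100.128 192.168.100.191
cidr_range 192.168.100.100/24   # prints: 192.168.100.0 192.168.100.255
```

This matches the output above: container b1 received 192.168.100.128, the first address of the fixed-cidr range, while its netmask is still the /24 taken from bip.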

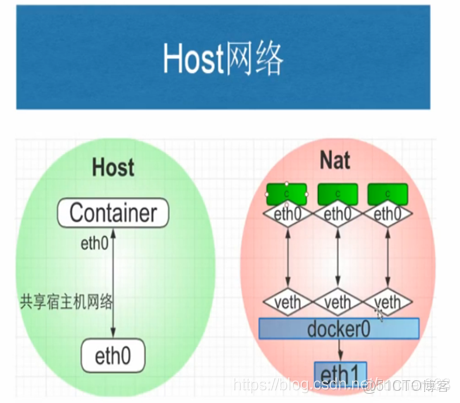

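Host mode

A container started in host mode does not create its own virtual NIC; it uses the host's network interfaces and IP addresses directly. The IP information seen inside the container is therefore the host's, and the container is reached via the host IP plus the container's port. Resources other than the network, such as the file system and process list, remain isolated from the host.

Because this mode uses the host network directly with no translation, it offers the best network performance. However, containers cannot use the same port, so it suits workloads whose container ports are relatively fixed.

Characteristics of the host network mode:

- Selected with the --network host option

- Shares the host's network

- No network isolation between containers

- No network performance overhead

- Network troubleshooting is relatively simple

- Prone to port conflicts

- Network usage cannot be accounted per container

- Port mapping is not supported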
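Since host-mode containers bind directly to host ports, it can help to check for an existing listener before starting one. The following sketch assumes the usual column layout of `ss -ntl` (state in column 1, local address:port in column 4); port_in_use is a hypothetical helper that reads that output on stdin.

```shell
#!/usr/bin/env bash
# Sketch: succeed if `ss -ntl` output on stdin contains a TCP listener
# on the given port. Only the last colon-separated field of the local
# address column is compared, so 0.0.0.0:80, *:80 and [::]:80 all match.
port_in_use() {
    awk -v p="$1" '
        $1 == "LISTEN" {
            n = split($4, parts, ":")
            if (parts[n] == p) found = 1
        }
        END { exit !found }
    '
}

if ss -ntl 2>/dev/null | port_in_use 80; then
    echo "80/tcp is already listening on the host"
else
    echo "no listener found on 80/tcp"
fi
```

Unlike a plain `grep :80`, this does not match ports such as 8080 or 8001.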

Example:

#View the host's network settings

[root@ubuntu1804 ~]#ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.0.1 netmask 255.255.0.0 broadcast 172.17.255.255

inet6 fe80::42:2ff:fe7f:a8c6 prefixlen 64 scopeid 0x20<link>

ether 02:42:02:7f:a8:c6 txqueuelen 0 (Ethernet)

RX packets 63072 bytes 152573158 (152.5 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 56611 bytes 310696704 (310.6 MB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.100 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:fe34:df91 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:34:df:91 txqueuelen 1000 (Ethernet)

RX packets 2029082 bytes 1200597401 (1.2 GB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 7272209 bytes 11576969391 (11.5 GB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 3533 bytes 320128 (320.1 KB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 3533 bytes 320128 (320.1 KB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@ubuntu1804 ~]#route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.2 0.0.0.0 UG 0 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

#Before starting the container, confirm that port 80/tcp is not open on the host

[root@ubuntu1804 ~]#ss -ntl|grep :80

#Create a container in host mode

[root@ubuntu1804 ~]#docker run -d --network host --name web1 nginx-centos7-base:1.6.1

41fb5b8e41db26e63579a424df643d1f02e272dc75e76c11f4e313a443187ed1

#After the container is created, port 80/tcp is open on the host

[root@ubuntu1804 ~]#ss -ntlp|grep :80

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

users:(("nginx",pid=43762,fd=6),("nginx",pid=43737,fd=6))

#Enter the container

[root@ubuntu1804 ~]#docker exec -it web1 bash

#Inside the container the prompt still shows the host's hostname

[root@ubuntu1804 /]# hostname

ubuntu1804.wang.org

[root@ubuntu1804 /]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.0.1 netmask 255.255.0.0 broadcast 172.17.255.255

inet6 fe80::42:2ff:fe7f:a8c6 prefixlen 64 scopeid 0x20<link>

ether 02:42:02:7f:a8:c6 txqueuelen 0 (Ethernet)

RX packets 63072 bytes 152573158 (145.5 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 56611 bytes 310696704 (296.3 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.100 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:fe34:df91 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:34:df:91 txqueuelen 1000 (Ethernet)

RX packets 2028984 bytes 1200589212 (1.1 GiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 7272137 bytes 11576960933 (10.7 GiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 3533 bytes 320128 (312.6 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 3533 bytes 320128 (312.6 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@ubuntu1804 /]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.2 0.0.0.0 UG 0 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

#Access a remote host from the container

[root@ubuntu1804 /]# curl 10.0.0.7

Website on 10.0.0.7

#Check the access log on the remote host

[root@centos7 ~]#tail -n1 /var/log/httpd/access_log

10.0.0.100 - - [01/Feb/2020:19:58:06 +0800] "GET / HTTP/1.1" 200 20 "-"

"curl/7.29.0"

#The remote host can access the container's web service

[root@centos7 ~]#curl 10.0.0.100/app/

Test Page in app

Example: port mapping does not work in host mode

[root@ubuntu1804 ~]#ss -ntl|grep :81

[root@ubuntu1804 ~]#docker run -d --network host --name web2 -p 81:80 nginx-centos7-base:1.6.1

WARNING: Published ports are discarded when using host network mode

6b6a910d79d94b188f719bc6ad00c274acd76a4a2929212157cd49b5219d44ae

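#In host mode the port mapping is discarded, but the container is still created; here web2 then exited, presumably because port 80 was already held by web1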

[root@ubuntu1804 ~]#docker ps -a

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

6b6a910d79d9 nginx-centos7-base:1.6.1 "/apps/nginx/sbin/ng…" 6

seconds ago Exited (1) 2 seconds ago web2

b27c0fd28b40 nginx-centos7-base:1.6.1 "/apps/nginx/sbin/ng…" About a

minute ago Up About a minute web1

Example: comparing port mappings of the host-mode containers above with a bridge-mode container

[root@ubuntu1804 ~]#docker port web1

[root@ubuntu1804 ~]#docker port web2

[root@ubuntu1804 ~]#docker run -d --network bridge -p 8001:80 --name web3 nginx-centos7-base:1.6.1

4095372b9a561704eac98ccef8041a80a2cdc2aa7b57d2798dec1a8dcb00c377

[root@ubuntu1804 ~]#docker port web3

80/tcp -> 0.0.0.0:8001

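None mode

In none mode Docker performs no network configuration for the container: apart from the loopback interface it has no NIC, no IP address, and no routes, so by default it cannot communicate with the outside. A NIC and an IP address must be added manually, so this mode is rarely used.

Characteristics of none mode

- Selected with the --network none option

- No networking by default; cannot communicate with the outside

- Port mapping is not possible

- Suitable for test environments

Example: starting a container in none mode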

[root@ubuntu1804 ~]#docker run -d --network none -p 8001:80 --name web1-none nginx-centos7-base:1.6.1

5207dcbd0aeea88548819267d3751135e337035475cf3cd63a5e1be6599c0208

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

5207dcbd0aee nginx-centos7-base:1.6.1 "/apps/nginx/sbin/ng…" About a

minute ago Up About a minute web1-none

[root@ubuntu1804 ~]#docker port web1-none

[root@ubuntu1804 ~]#docker exec -it web1-none bash

[root@5207dcbd0aee /]# ifconfig -a

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@5207dcbd0aee /]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

[root@5207dcbd0aee /]# netstat -ntl

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN

[root@5207dcbd0aee /]# ping www.baidu.com

ping: www.baidu.com: Name or service not known

[root@5207dcbd0aee /]# ping 172.17.0.1

connect: Network is unreachable

[root@5207dcbd0aee /]#

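Container mode

A container created in this mode must name an existing container whose network it will share, rather than sharing the host's network. The new container does not create its own NIC or configure its own IP; it shares the IP address and port range of the named container, so its ports must not conflict with that container's ports. Everything other than the network, such as the file system and process list, remains isolated, and processes in the two containers can communicate over the lo interface.

Characteristics of container mode

- Selected with the --network container:<name or ID> option

- Isolated from the host's network namespace

- The containers share one network namespace; the new container uses the other's network directly

- The first container's network may be bridge, none, or host; the second container depends on the first and shares its network

- If the first container is stopped, the second container cannot be created

- The second container can reach the first directly at 127.0.0.1

- Well suited to containers that communicate with each other frequently

- Port mapping is not supported by default; rarely used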
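Because the second container can only be created while the first one is running, a small guard can make the failure mode explicit. This is a sketch rather than a prescribed workflow: run_in_netns_of is a hypothetical helper name, and it relies on `docker inspect -f '{{.State.Running}}'` printing true for a running container.

```shell
#!/usr/bin/env bash
# Sketch: start a container inside another container's network
# namespace, but only if the target container is currently running.
# Usage: run_in_netns_of <target container> <docker run arguments...>
run_in_netns_of() {
    local target=$1
    shift
    if [ "$(docker inspect -f '{{.State.Running}}' "$target" 2>/dev/null)" != "true" ]; then
        echo "container '$target' is not running; cannot share its network" >&2
        return 1
    fi
    docker run -d --network "container:$target" "$@"
}
```

For instance, `run_in_netns_of server1 --name server2 nginx-centos7-base:1.6.1` would correspond to the server1/server2 example later in this section. Any port that should be reachable from outside has to be published with -p on the first container.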

Example: running WordPress using container mode

[root@ubuntu2004 ~]#docker run -d -p 80:80 --name wordpress -v /data/wordpress:/var/www/html --restart=always wordpress:php7.4-apache

[root@ubuntu2004 ~]#docker run --network container:wordpress -e MYSQL_ROOT_PASSWORD=123456 -e MYSQL_DATABASE=wordpress -e MYSQL_USER=wordpress -e MYSQL_PASSWORD=123456 --name mysql -d -v /data/mysql:/var/lib/mysql --restart=always mysql:8.0.29-oracle

#Note: use 127.0.0.1 as the database host address; localhost is not supported

Example: WordPress on LNMP

#Prepare the configuration file

[root@ubuntu2204 ~]#cat /data/nginx/conf.d/www.wang.org.conf

server {

listen 80;

server_name www.wang.org;

root /var/www/html;

index index.php index.html index.htm;

client_max_body_size 100m;

location ~ \.php$ {

root /var/www/html;

fastcgi_pass 127.0.0.1:9000;

fastcgi_index index.php;

fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;

include fastcgi_params;

}

}

[root@ubuntu2204 ~]#docker run -d -p 80:80 --name wordpress -v /data/www:/var/www/html -v /data/nginx/conf.d/:/apps/nginx/conf/conf.d/ ayaka/nginx:1.24.0-alpine-3.18.0

[root@ubuntu2204 ~]#docker run --name php-fpm --network container:wordpress -d -v /data/www:/var/www/html wordpress:6.2.2-php8.0-fpm-alpine

[root@ubuntu2204 ~]#docker run --name mysql --network container:wordpress -e MYSQL_ROOT_PASSWORD=123456 -e MYSQL_DATABASE=wordpress -e MYSQL_USER=wordpress -e MYSQL_PASSWORD=123456 -d -v /data/mysql:/var/lib/mysql --restart=always mysql:8.0.29-oracle

#After initializing the site through the browser, files are generated automatically under /data/www

[root@ubuntu2204 ~]#ll -d /data/www/

drwxr-xr-x 5 82 82 4096 6月 17 21:20 /data/www//

[root@ubuntu2204 ~]#ll /data/www/

总计 256

drwxr-xr-x 5 82 82 4096 6月 17 21:20 ./

drwxr-xr-x 7 root root 4096 6月 17 21:17 ../

-rw-r--r-- 1 82 82 261 6月 15 16:15 .htaccess

-rw-r--r-- 1 82 82 405 2月 6 2020 index.php

-rw-r--r-- 1 82 82 19915 1月 1 08:06 license.txt

-rw-r--r-- 1 82 82 7402 3月 5 08:52 readme.html

-rw-r--r-- 1 82 82 7205 9月 17 2022 wp-activate.php

drwxr-xr-x 9 82 82 4096 5月 20 12:30 wp-admin/

-rw-r--r-- 1 82 82 351 2月 6 2020 wp-blog-header.php

-rw-r--r-- 1 82 82 2338 11月 10 2021 wp-comments-post.php

-rw-rw-r-- 1 82 82 5492 6月 15 16:14 wp-config-docker.php

-rw-rw-rw- 1 82 82 3292 6月 17 21:20 wp-config.php

-rw-r--r-- 1 82 82 3013 2月 23 18:38 wp-config-sample.php

drwxr-xr-x 7 82 82 4096 6月 17 21:22 wp-content/

-rw-r--r-- 1 82 82 5536 11月 23 2022 wp-cron.php

drwxr-xr-x 28 82 82 16384 5月 20 12:30 wp-includes/

-rw-r--r-- 1 82 82 2502 11月 27 2022 wp-links-opml.php

-rw-r--r-- 1 82 82 3792 2月 23 18:38 wp-load.php

-rw-r--r-- 1 82 82 49330 2月 23 18:38 wp-login.php

-rw-r--r-- 1 82 82 8541 2月 3 21:35 wp-mail.php

-rw-r--r-- 1 82 82 24993 3月 1 23:05 wp-settings.php

-rw-r--r-- 1 82 82 34350 9月 17 2022 wp-signup.php

-rw-r--r-- 1 82 82 4889 11月 23 2022 wp-trackback.php

-rw-r--r-- 1 82 82 3238 11月 29 2022 xmlrpc.php

Example: an LNP stack using container mode

#Prepare the nginx configuration file for connecting to php-fpm

[root@ubuntu2004 ~]#cat /data/nginx/conf.d/php.conf

server {

listen 80;

server_name www.wang.org;

root /usr/share/nginx/html;

index index.php;

location ~ \.php$ {

root /usr/share/nginx/html;

fastcgi_pass 127.0.0.1:9000;

fastcgi_index index.php;

fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;

include fastcgi_params;

}

}

#Prepare a PHP test page

[root@ubuntu2004 ~]#cat /data/nginx/html/index.php

<?php

phpinfo();

?>

#Start nginx

[root@ubuntu2004 ~]#docker run -d --name nginx -v /data/nginx/conf.d/:/etc/nginx/conf.d/ -v /data/nginx/html:/usr/share/nginx/html -p 80:80 ayaka/nginx:1.20.0

#Start php-fpm

[root@ubuntu2004 ~]#docker run -d --network container:nginx --name php-fpm -v /data/nginx/html/:/usr/share/nginx/html php:8.1-fpm

#Note: the php:8.1-fpm image lacks the packages needed for database connections and cannot connect to MySQL directly

Example:

#Create the first container

[root@ubuntu1804 ~]#docker run -it --name server1 -p 80:80 alpine:3.11 sh

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:AC:11:00:02

inet addr:172.17.0.2 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:9 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:766 (766.0 B) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ # netstat -ntl

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State

/ #

#Run the following in another terminal

[root@ubuntu1804 ~]#docker ps

CONTAINER ID IMAGE COMMAND CREATED

STATUS PORTS NAMES

4d342fac169f alpine:3.11 "sh" 29 seconds ago

Up 28 seconds 0.0.0.0:80->80/tcp server1

[root@ubuntu1804 ~]#docker port server1

80/tcp -> 0.0.0.0:80

#The web service cannot be accessed

[root@ubuntu1804 ~]#curl 127.0.0.1/app/

curl: (52) Empty reply from server

#Create a second container in container mode, sharing the first container's network

[root@ubuntu1804 ~]#docker run -d --name server2 --network container:server1 nginx-centos7-base:1.6.1

#The web service is now accessible

[root@ubuntu1804 ~]#curl 127.0.0.1/app/

Test Page in app

[root@ubuntu1804 ~]#docker exec -it server2 bash

#Shares the same network as the first container

[root@4d342fac169f /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.17.0.2 netmask 255.255.0.0 broadcast 172.17.255.255

ether 02:42:ac:11:00:02 txqueuelen 0 (Ethernet)

RX packets 29 bytes 2231 (2.1 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 12 bytes 1366 (1.3 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 10 bytes 860 (860.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 10 bytes 860 (860.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@4d342fac169f /]# netstat -ntl

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN

#External networks are reachable

[root@4d342fac169f /]# ping www.baidu.com

PING www.a.shifen.com (61.135.169.121) 56(84) bytes of data.

64 bytes from 61.135.169.121 (61.135.169.121): icmp_seq=1 ttl=127 time=3.99 ms

64 bytes from 61.135.169.121 (61.135.169.121): icmp_seq=2 ttl=127 time=5.03 ms

^C

--- www.a.shifen.com ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 1002ms

rtt min/avg/max/mdev = 3.999/4.514/5.030/0.519 ms

[root@4d342fac169f /]#

Example: the first container uses host mode and the second shares its network

[root@ubuntu1804 ~]#docker run -d --name c1 --network host nginx-centos7.8:v5.0-1.18.0

5a60804f3917d82dfe32db140411cf475f20acce0fe4674d94e4557e1003d8e0

[root@ubuntu1804 ~]#docker run -it --name c2 --network container:c1 centos7.8:v1.0

[root@ubuntu1804 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:63:8b:ac brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe63:8bac/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:24:86:98:fb brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:24ff:fe86:98fb/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 ~]#docker exec -it c1 bash

[root@ubuntu1804 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:63:8b:ac brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe63:8bac/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:24:86:98:fb brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:24ff:fe86:98fb/64 scope link

valid_lft forever preferred_lft forever

[root@ubuntu1804 /]#

Example: the first container uses none mode and the second shares its network

[root@ubuntu1804 ~]#docker run -d --name c1 --network none nginx-centos7.8:v5.0-1.18.0

caf5b57299c8359f21f30b8894c5f8496ff39b44ead6a732056000689cb0c91c

[root@ubuntu1804 ~]#docker run -it --name c2 --network container:c1 centos7.8:v1.0

[root@caf5b57299c8 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

[root@caf5b57299c8 /]#

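Custom networks

Besides the modes above, you can define custom networks with your own subnet, gateway, and other settings.

Custom networks let different application clusters manage their networks independently without affecting one another, and containers on the same network can reach each other directly by container name, which is very convenient.

Note: containers in a custom network can reach each other directly by container name, without --link

Implementing a custom network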

[root@ubuntu1804 ~]#docker network --help

Usage: docker network COMMAND

Manage networks

Commands:

connect Connect a container to a network

create Create a network

disconnect Disconnect a container from a network

inspect Display detailed information on one or more networks

ls List networks

prune Remove all unused networks

rm Remove one or more networks

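Create a custom network

docker network create -d <mode> --subnet <CIDR> --gateway <gateway> <custom network name>

#Note: mode cannot be host or none; the default is bridge

-d <mode> can be omitted; it defaults to bridge

View custom network details

docker network inspect <custom network name or network ID>

Use a custom network

docker run --network <custom network name> <image name>

docker run --net <custom network name> --ip <static IP> <image name>

#Note: static IPs are only supported on custom networks

Remove a custom network

docker network rm <custom network name or network ID>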
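The create and inspect commands above can be combined so that a setup script is safe to run repeatedly. The following is a minimal sketch, assuming the bridge driver; ensure_network is a hypothetical helper name, and the subnet and gateway are whatever the caller passes in.

```shell
#!/usr/bin/env bash
# Sketch: create a user-defined bridge network only if a network with
# that name does not exist yet.
# Usage: ensure_network <name> <subnet CIDR> <gateway>
ensure_network() {
    local name=$1 subnet=$2 gateway=$3
    if docker network inspect "$name" >/dev/null 2>&1; then
        echo "network $name already exists"
    else
        docker network create -d bridge --subnet "$subnet" --gateway "$gateway" "$name"
    fi
}
```

For example, `ensure_network test-net 172.27.0.0/16 172.27.0.1` would set up the network used in the hands-on example that follows, after which containers can be attached with `docker run --network test-net`.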

Example: the three built-in networks cannot be removed

[root@ubuntu1804 ~]#docker network rm test-net

test-net

[root@ubuntu1804 ~]#docker network rm none

Error response from daemon: none is a pre-defined network and cannot be removed

[root@ubuntu1804 ~]#docker network rm bridge

Error response from daemon: bridge is a pre-defined network and cannot be removed

[root@ubuntu1804 ~]#docker network rm host

Error response from daemon: host is a pre-defined network and cannot be removed

Hands-on example: custom network

Create a custom network

[root@ubuntu1804 ~]#docker network create -d bridge --subnet 172.27.0.0/16 --gateway 172.27.0.1 test-net

[root@ubuntu1804 ~]#docker network ls

NETWORK ID NAME DRIVER SCOPE

cabde0b33c94 bridge bridge local

cb64aa83626c host host local

10619d45dcd4 none null local

c90dee3b7937 test-net bridge local

[root@ubuntu1804 ~]#docker inspect test-net

[

{

"Name": "test-net",

"Id":

"00ab0f2d29e82d387755e1bea19532dc279fa134a565e496d308ec62f7edf434",

"Created": "2020-07-22T09:59:09.431393706+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "172.27.0.0/16",

"Gateway": "172.27.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]

[root@ubuntu1804 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:34:df:91 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.100/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe34:df91/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state

DOWN group default

link/ether 02:42:9b:31:73:2b brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:9bff:fe31:732b/64 scope link

valid_lft forever preferred_lft forever

#A new virtual NIC has been added

14: br-c90dee3b7937: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue

state DOWN group default

link/ether 02:42:58:7c:f0:93 brd ff:ff:ff:ff:ff:ff

inet 172.27.0.1/16 brd 172.27.255.255 scope global br-c90dee3b7937

valid_lft forever preferred_lft forever

inet6 fe80::42:58ff:fe7c:f093/64 scope link

valid_lft forever preferred_lft forever

#A new bridge has been added

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

br-00ab0f2d29e8 8000.024245e647ec no

docker0 8000.0242cfd26f0a no

[root@ubuntu1804 ~]#route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.2 0.0.0.0 UG 0 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

172.27.0.0 0.0.0.0 255.255.0.0 U 0 0 0 br-c90dee3b7937

Create a container on the custom network

[root@ubuntu1804 ~]#docker run -it --rm --network test-net alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

15: eth0@if16: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:1b:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.27.0.2/16 brd 172.27.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 172.27.0.1 0.0.0.0 UG 0 0 0 eth0

172.27.0.0 0.0.0.0 255.255.0.0 U 0 0 0 eth0

/ # cat /etc/resolv.conf

search ayakaa.com wang.org

nameserver 127.0.0.11

options ndots:0

/ # ping -c1 www.baidu.com

PING www.baidu.com (111.206.223.172): 56 data bytes

64 bytes from 111.206.223.172: seq=0 ttl=127 time=5.053 ms

#Open a new terminal window and inspect the network

[root@ubuntu1804 ~]#docker inspect test-net

[

{

"Name": "test-net",

"Id":

"00ab0f2d29e82d387755e1bea19532dc279fa134a565e496d308ec62f7edf434",

"Created": "2020-07-22T09:59:09.431393706+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "172.27.0.0/16",

"Gateway": "172.27.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

#The network information of the containers on this network now appears

"Containers": {

"89e54ed71c111ac7b41a62ce20191707cf53a3a234ba3e25ac11c1a4a6bed7ef":

{

"Name": "frosty_ellis",

"EndpointID":

"cf72bf192df73a8b290d8b18dd8507fef64a1f9480d4d65f74c23258d20dbafb",

"MacAddress": "02:42:ac:1b:00:02",

"IPv4Address": "172.27.0.2/16",

"IPv6Address": ""

}

},

"Options": {},

"Labels": {}

}

]

Hands-on example: communication between containers on a custom network

[root@ubuntu1804 ~]#docker network ls

NETWORK ID NAME DRIVER SCOPE

c2f770f19400 bridge bridge local

220d4008a6a0 host host local

adb6f338ff6d none null local

00ab0f2d29e8 test-net bridge local

[root@ubuntu1804 ~]#docker run -it --rm --network test-net --name test1 alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

23: eth0@if24: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:1b:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.27.0.2/16 brd 172.27.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.27.0.2 c3446876a38

# Run this only after container test2 has been created in the later step

/ # ping -c1 test2

PING test2 (172.27.0.3): 56 data bytes

64 bytes from 172.27.0.3: seq=0 ttl=64 time=0.072 ms

[root@ubuntu1804 ~]#docker run -it --rm --network test-net --name test2 alpine

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

25: eth0@if26: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:1b:00:03 brd ff:ff:ff:ff:ff:ff

inet 172.27.0.3/16 brd 172.27.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.27.0.3 305fd14a4b70

/ # ping -c1 test1

PING test1 (172.27.0.2): 56 data bytes

64 bytes from 172.27.0.2: seq=0 ttl=64 time=0.074 ms

--- test1 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.074/0.074/0.074 ms
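The two interactive sessions above can be condensed into one non-interactive script. This is only a sketch: run() and DRY_RUN are illustrative helpers, not part of Docker, and `sleep 3600` is an assumed way to keep the containers alive. By default the script only prints the commands. Set DRY_RUN=0 on a host with Docker to execute them.

```shell
# Sketch: two containers on a user-defined bridge reach each other by name.
# DRY_RUN and run() are illustrative helpers, not part of Docker.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"          # print the command only
    else
        "$@"                 # execute the command
    fi
}

demo_name_resolution() {
    # Create the user-defined bridge network with the subnet used above.
    run docker network create -d bridge --subnet 172.27.0.0/16 --gateway 172.27.0.1 test-net
    # Start two long-running containers attached to that network.
    run docker run -d --rm --network test-net --name test1 alpine sleep 3600
    run docker run -d --rm --network test-net --name test2 alpine sleep 3600
    # Container names are resolved by Docker's embedded DNS (127.0.0.11).
    run docker exec test1 ping -c1 test2
    run docker exec test2 ping -c1 test1
    # Clean up the containers and the network.
    run docker rm -f test1 test2
    run docker network rm test-net
}

demo_name_resolution
```

Name resolution works here because both containers are on the same user-defined network. Their /etc/hosts files contain only their own entry, as the transcripts above show.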

Communication between containers on different networks of the same host

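Start two containers, one on the user-defined network and one on the default bridge network. By default they cannot communicate because of Docker's iptables rules.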

[root@ubuntu1804 ~]#docker run -it --rm --name test1 alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

23: eth0@if24: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 172.27.0.2 # cannot reach the container on the user-defined network

PING 172.27.0.2 (172.27.0.2): 56 data bytes

[root@ubuntu1804 ~]#docker run -it --rm --network test-net --name test2 alpine sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

21: eth0@if22: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

state UP

link/ether 02:42:ac:1b:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.27.0.2/16 brd 172.27.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 172.17.0.2 # cannot reach the container on the default network

PING 172.17.0.2 (172.17.0.2): 56 data bytes
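The two containers sit in different subnets (172.17.0.0/16 on docker0, 172.27.0.0/16 on test-net), so traffic between them has to be routed by the host instead of being switched inside one bridge. The following is a small sketch of that subnet check in plain shell arithmetic. ip_to_int and ip_in_cidr are hypothetical helper names introduced for illustration.

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
    _old_ifs=$IFS
    IFS=.
    set -- $1            # split the address into four octets
    IFS=$_old_ifs
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Return 0 when the address ($1) lies inside the CIDR block ($2).
ip_in_cidr() {
    _net=${2%/*}
    _bits=${2#*/}
    _mask=$(( (0xFFFFFFFF << (32 - _bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$1") & _mask )) -eq $(( $(ip_to_int "$_net") & _mask )) ]
}

# Addresses and subnets taken from the transcripts above.
for ip in 172.17.0.2 172.27.0.2; do
    for cidr in 172.17.0.0/16 172.27.0.0/16; do
        if ip_in_cidr "$ip" "$cidr"; then
            echo "$ip is in $cidr"
        fi
    done
done
```

Each address matches exactly one of the two subnets, which is why packets between the containers must cross from one bridge to the other through the host's FORWARD chain.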

Hands-on example 1: modifying iptables so that containers on different networks of the same host can communicate

# Confirm that ip_forward is enabled

[root@ubuntu1804 ~]#cat /proc/sys/net/ipv4/ip_forward

1

# The default network and the user-defined network are two separate bridges

[root@ubuntu1804 ~]#brctl show

bridge name bridge id STP enabled interfaces

br-c90dee3b7937 8000.0242587cf093 no veth984a5b4

docker0 8000.02429b31732b no veth1a20128

[root@ubuntu1804 ~]#iptables -vnL

Chain INPUT (policy ACCEPT 1241 packets, 87490 bytes)

pkts bytes target prot opt in out source destination

Chain FORWARD (policy DROP 0 packets, 0 bytes)

pkts bytes target prot opt in out source destination

859 72156 DOCKER-USER all -- * * 0.0.0.0/0 0.0.0.0/0

859 72156 DOCKER-ISOLATION-STAGE-1 all -- * * 0.0.0.0/0

0.0.0.0/0

0 0 ACCEPT all -- * br-c90dee3b7937 0.0.0.0/0

0.0.0.0/0 ctstate RELATED,ESTABLISHED

0 0 DOCKER all -- * br-c90dee3b7937 0.0.0.0/0

0.0.0.0/0

0 0 ACCEPT all -- br-c90dee3b7937 !br-c90dee3b7937 0.0.0.0/0

0.0.0.0/0

0 0 ACCEPT all -- br-c90dee3b7937 br-c90dee3b7937 0.0.0.0/0

0.0.0.0/0

0 0 ACCEPT all -- * docker0 0.0.0.0/0 0.0.0.0/0

ctstate RELATED,ESTABLISHED

0 0 DOCKER all -- * docker0 0.0.0.0/0 0.0.0.0/0

0 0 ACCEPT all -- docker0 !docker0 0.0.0.0/0 0.0.0.0/0

0 0 ACCEPT all -- docker0 docker0 0.0.0.0/0 0.0.0.0/0

Chain OUTPUT (policy ACCEPT 1456 packets, 209K bytes)

pkts bytes target prot opt in out source destination

Chain DOCKER (2 references)

pkts bytes target prot opt in out source destination

Chain DOCKER-ISOLATION-STAGE-1 (1 references)

pkts bytes target prot opt in out source destination

289 24276 DOCKER-ISOLATION-STAGE-2 all -- br-c90dee3b7937 !br-c90dee3b7937

0.0.0.0/0 0.0.0.0/0

570 47880 DOCKER-ISOLATION-STAGE-2 all -- docker0 !docker0 0.0.0.0/0

0.0.0.0/0

......

Chain DOCKER-USER (1 references)

pkts bytes target prot opt in out source destination

859 72156 RETURN all -- * * 0.0.0.0/0 0.0.0.0/0

[root@ubuntu1804 ~]#iptables-save

# Generated by iptables-save v1.6.1 on Sun Feb 2 14:33:19 2020

*filter

:INPUT ACCEPT [1283:90246]

:FORWARD DROP [0:0]

:OUTPUT ACCEPT [1489:217126]

:DOCKER - [0:0]

:DOCKER-ISOLATION-STAGE-1 - [0:0]

:DOCKER-ISOLATION-STAGE-2 - [0:0]

:DOCKER-USER - [0:0]

-A FORWARD -j DOCKER-USER

-A FORWARD -j DOCKER-ISOLATION-STAGE-1

-A FORWARD -o br-c90dee3b7937 -m conntrack --ctstate RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -o br-c90dee3b7937 -j DOCKER

-A FORWARD -i br-c90dee3b7937 ! -o br-c90dee3b7937 -j ACCEPT

-A FORWARD -i br-c90dee3b7937 -o br-c90dee3b7937 -j ACCEPT

-A FORWARD -o docker0 -m conntrack --ctstate RELATED,ESTABLISHED -j ACCEPT

-A FORWARD -o docker0 -j DOCKER

-A FORWARD -i docker0 ! -o docker0 -j ACCEPT

-A FORWARD -i docker0 -o docker0 -j ACCEPT

-A DOCKER-ISOLATION-STAGE-1 -i br-c90dee3b7937 ! -o br-c90dee3b7937 -j DOCKER-ISOLATION-STAGE-2

-A DOCKER-ISOLATION-STAGE-1 -i docker0 ! -o docker0 -j DOCKER-ISOLATION-STAGE-2

-A DOCKER-ISOLATION-STAGE-1 -j RETURN

-A DOCKER-ISOLATION-STAGE-2 -o br-c90dee3b7937 -j DROP # note this rule

-A DOCKER-ISOLATION-STAGE-2 -o docker0 -j DROP # note this rule

-A DOCKER-ISOLATION-STAGE-2 -j RETURN

-A DOCKER-USER -j RETURN

COMMIT

# Completed on Sun Feb 2 14:33:19 2020
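Reading the rules above: a packet entering from docker0 and leaving through another interface jumps from DOCKER-ISOLATION-STAGE-1 to DOCKER-ISOLATION-STAGE-2, where it is dropped if the outgoing interface is another Docker bridge. The same applies in the opposite direction. A minimal sketch for listing those bridges from iptables-save text is shown below. isolated_bridges is a hypothetical helper name, and the chain names are assumed to match the output above. Other Docker versions may use different ones.

```shell
# Sketch: read `iptables-save` text on stdin and print every bridge
# that has a DROP rule in DOCKER-ISOLATION-STAGE-2.
# isolated_bridges is a hypothetical helper name.
isolated_bridges() {
    awk '$1 == "-A" && $2 == "DOCKER-ISOLATION-STAGE-2" &&
         $3 == "-o" && $5 == "-j" && $6 == "DROP" { print $4 }'
}

# Sample rules copied from the output above. On a real host (as root), use:
#   iptables-save | isolated_bridges
isolated_bridges <<'EOF'
-A DOCKER-ISOLATION-STAGE-1 -i docker0 ! -o docker0 -j DOCKER-ISOLATION-STAGE-2
-A DOCKER-ISOLATION-STAGE-2 -o br-c90dee3b7937 -j DROP
-A DOCKER-ISOLATION-STAGE-2 -o docker0 -j DROP
-A DOCKER-ISOLATION-STAGE-2 -j RETURN
EOF
```

With the sample rules, both bridges are listed, matching the two DROP rules marked in the iptables-save output.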